I currently have a 1 TiB NVMe drive that has been hovering at 100 GiB left for the past couple months. I’ve kept it down by deleting a game every couple weeks, but I would like to play something sometime, and I’m running out of games to delete if I need more space.

That’s why I’ve been thinking about upgrading to a 2 TiB drive, but I just saw an interesting forum thread about LVM cache. The promise of having the storage capacity of an HDD with (usually) the speed of an SSD seems very appealing, but is it actually as good as it seems to be?

And if it is possible, which software should be used? LVM cache seems like a decent option, but I’ve seen people say it’s slow. bcache is also sometimes mentioned, but apparently that one can be unreliable at times.

Beyond that, what method should be used? The Arch Wiki page for bcache mentions several options. Some only seem to cache writes, while some aim to keep the HDD idle as long as possible.

Also, does anyone run a setup like this themselves?

According to Filelight, I have 457 GiB in my home directory. 85 GiB of that is games, but I also have several virtual machines which take up about 100 GiB.

The `/` folder contains 38 GiB, most of which is due to the nix store (15 GiB) and system libraries (`/usr` is 22.5 GiB). I made a post about trying to figure out what was taking up storage 9 months ago. It’s probably time to try pruning docker again.

EDIT:

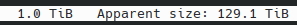

`ncdu` says I’ve stored 129.1 TiB lol

EDIT 2: docker and podman are using about 100 GiB of images.

This would be the first thing I’d look into getting rid of.

Could these just be containers instead? What are they storing?

How large is your (I assume home-manager) closure? If this is 2-3 generations worth, that sounds about right.

That’s extremely large. Like, 2x of what you’d expect a typical system to have.

You should have a look at what’s using all that space using your system package manager.

If you’re on btrfs and have a non-trivial subvolume setup, you can’t just let `ncdu` loose on the root subvolume. You need to take a more principled approach.

For assessing your actual working size, you need to ignore snapshots, for instance, as those are mostly the same extents as your “working set”.
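For a rough first pass, here is a minimal Python sketch (my own, not taken from any of the tools mentioned) that sums apparent file sizes under a directory without crossing onto another device. As far as I know, btrfs reports a distinct device ID for each subvolume, so this should skip nested subvolumes and snapshots in the same way `du -x` does. It cannot detect extents shared through reflinks, so treat the result as an upper bound. `tree_size` is a made-up name.

```python
import os
import stat


def tree_size(root):
    """Sum apparent sizes of regular files under root, staying on root's device."""
    root_dev = os.lstat(root).st_dev
    seen = set()  # (device, inode) pairs, so hard-linked files count once
    total = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune subdirectories on another device (mount points and,
        # by assumption, btrfs subvolumes such as snapshots).
        kept = []
        for d in dirnames:
            try:
                if os.lstat(os.path.join(dirpath, d)).st_dev == root_dev:
                    kept.append(d)
            except OSError:
                pass  # vanished or unreadable: skip it
        dirnames[:] = kept
        for name in filenames:
            try:
                st = os.lstat(os.path.join(dirpath, name))
            except OSError:
                continue
            if not stat.S_ISREG(st.st_mode):
                continue  # ignore symlinks, sockets, device nodes
            key = (st.st_dev, st.st_ino)
            if key in seen:
                continue
            seen.add(key)
            total += st.st_size
    return total
```

Something like `tree_size(os.path.expanduser("~")) / 2**30` would give your home directory's working size in GiB, which you can then compare against what the filesystem says is allocated.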

You need to keep in mind that snapshots do themselves take up space too though, depending on how much you’ve deleted or written since taking the snapshot.

`btdu` is a great tool to analyse space usage of a non-trivial btrfs setup in a probabilistic fashion. It’s not available in many distros but you have Nix and we have it of course ;)

Snapshots are the #1 most likely cause for your space usage woes. Any space usage that you cannot explain using your working set is probably caused by them.

Also: Are you using transparent compression? IME it can reduce space usage of data that is similar to typical Nix store contents by about half.
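If you want a ballpark figure before turning compression on, a small Python sketch like the one below can estimate how well a given file compresses. It uses `zlib` from the standard library and compresses each 128 KiB chunk independently, on the assumption that this roughly mirrors how btrfs compresses data in separate chunks. The function name, chunk size and level are my own choices, and zstd (which many people use on btrfs) will give somewhat different numbers.

```python
import zlib


def compression_ratio(path, chunk_size=128 * 1024, level=3):
    """Return compressed size / original size for one file.

    Each chunk is compressed on its own, so redundancy that spans
    chunk boundaries is deliberately not exploited.
    """
    original = 0
    compressed = 0
    with open(path, "rb") as f:
        while chunk := f.read(chunk_size):
            original += len(chunk)
            compressed += len(zlib.compress(chunk, level))
    # An empty file has nothing to compress; report a neutral ratio.
    return compressed / original if original else 1.0
```

A ratio around 0.5 on a sample of store paths would match the "about half" figure above. Files that are already compressed (images, archives, most game assets) will come out close to 1.0.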